Agent S2: An Open, Modular, and Scalable Framework for Computer Use Agents

Computer-use agents are autonomous AI agents that observe, reason, and perform tasks on behalf of human users by directly interacting with graphical user interfaces (GUIs), including desktops, mobile devices, browsers, and other software. They function as intelligent intermediaries between human users and their digital tools in the most intuitive way: mouse and keyboard control, just like a human. This human-like ability to navigate and control software marks a foundational leap in AI, setting the stage for the next era of technological progress powered by autonomous computer-use agents.

Today, we're excited to announce our next leap forward in computer-use agents: Agent S2, the second generation of our agentic framework. Building on our initial successes, Agent S2 offers even greater performance and modularity by leveraging both frontier foundation models and specialized models. Agent S2 achieves new state-of-the-art results, scales well with more steps, and most importantly, it is fully open!

State-of-the-Art Performance

Agent S2 demonstrates superior computer and phone use, as shown by significant gains on key benchmarks.

For computer use, Agent S2 delivers state-of-the-art results on OSWorld in both the 15-step and 50-step evaluations (the two most practical settings for real-world usage). These results indicate that our agentic framework takes precise actions and plans effectively, while being able to correct itself and improve over a long horizon. Notably, Agent S2 achieves 34.5% accuracy on the 50-step evaluation, surpassing the previous SOTA (OpenAI CUA/Operator at 32.6%) and demonstrating how agentic frameworks can scale beyond a single trained model.

For smartphone use, Agent S2 achieves 50% accuracy on AndroidWorld, surpassing the previous SOTA (UI-TARS at 46.8%) and demonstrating that agentic frameworks generalize across different visual UI environments.

Subsequent to this blog post, we achieved stronger results on AndroidWorld while preparing our paper. We have updated this table to reflect the latest performance. Please refer to the paper for comprehensive details.

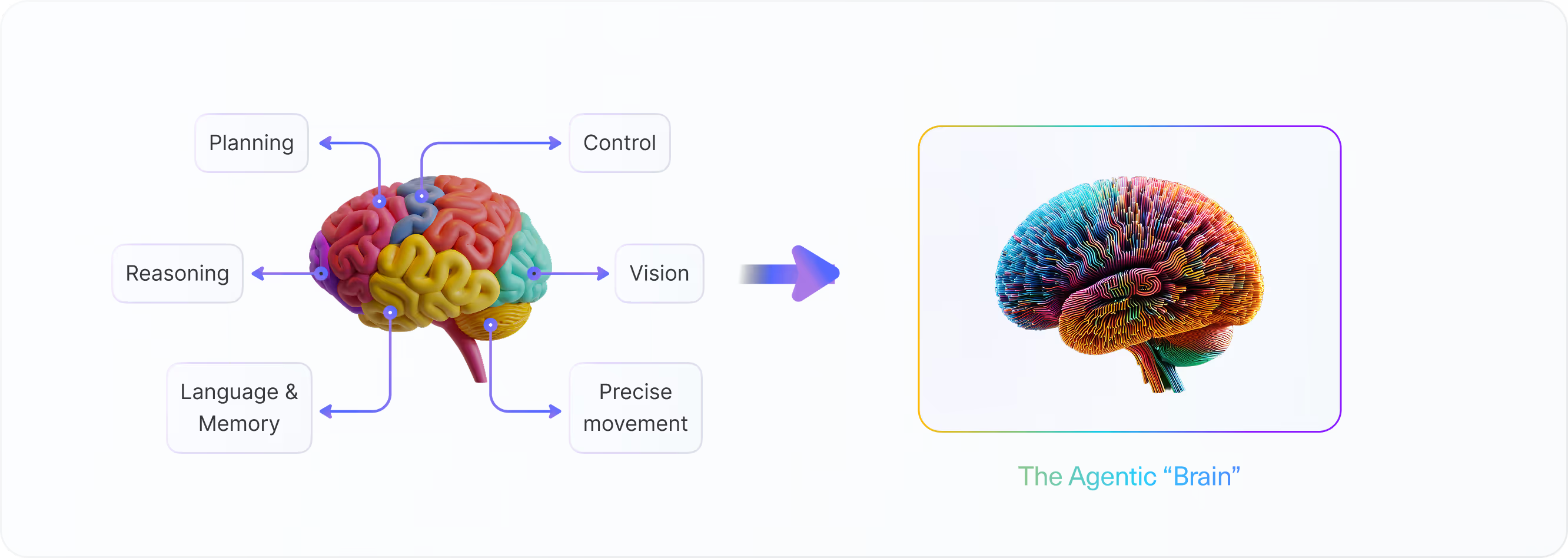

Why Modular Frameworks Matter: Inspiration from the Human Brain

The human brain is a remarkable example of modular design—a network of specialized components working in unison. Different regions excel at distinct tasks: the left hemisphere drives analytical thinking, the right fuels creativity, while motor and sensory areas manage physical coordination. This modular structure, optimized for collaboration, inspires how we approach AI agent design for computer use.

At Simular, we believe the most effective AI agents should follow a similar principle: modular frameworks that seamlessly orchestrate diverse models, rather than relying on a single monolithic system. Our initial agent framework, Agent S, launched on October 11, 2024, embodies this vision. With experience-augmented hierarchical planning at its core, Agent S achieved better overall performance than any other model or framework at the time.

Our latest research further shows that a well-designed modular framework, even with suboptimal individual models, can outperform the best standalone model. Why? Because different models excel in different areas, each with its own strengths and weaknesses. A robust framework optimizes the orchestration among these modules, ensuring that each model contributes where it performs best, leading to superior overall outcomes. In the rapidly evolving landscape of foundation models, modularity is key. Our next-generation agentic framework, Agent S2, achieves significantly better perception, planning, and fine-grained control by virtue of its improved modularity and flexibility.

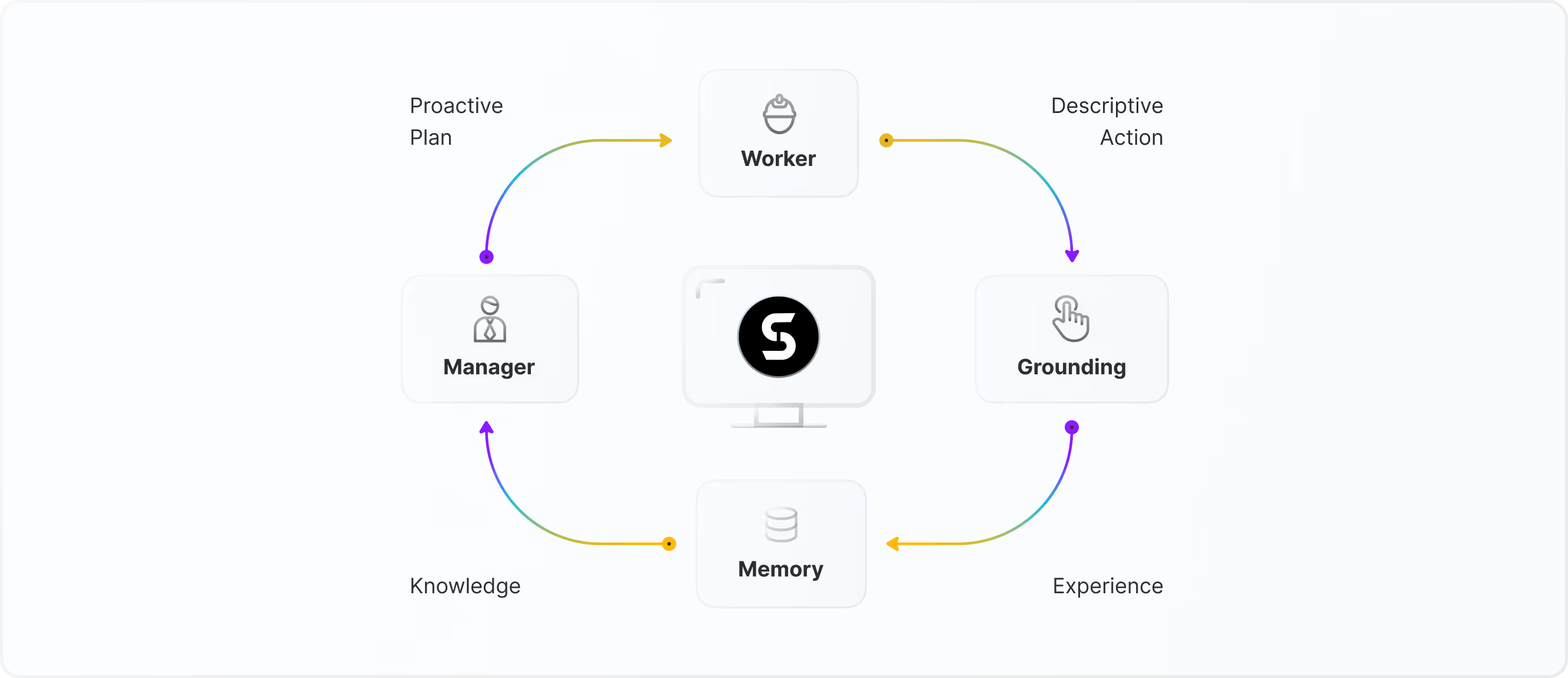

Agent S2: How it works

Agent S2 is built to handle complex digital tasks through a modular and scalable approach. Its framework emphasizes four key design principles:

Proactive Hierarchical Planning

Agent S2 follows a natural task hierarchy, combining specialized models for low-level execution with generalized models for high-level planning. Low-level tasks, such as UI element selection or text highlighting, demand high precision and domain-specific expertise, while high-level tasks require broader adaptability and strategic oversight. In addition, a key advancement in Agent S2 is its shift from reactive to proactive planning. Instead of replanning only after encountering errors, which requires extra steps to backtrack and may accumulate further errors, Agent S2 dynamically updates its plan after each subtask. This proactive approach improves adaptability to real-time changes, continuity from one subtask to the next, and optimality of future steps.
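The proactive loop described above can be sketched in a few lines. This is a minimal illustration rather than Agent S2's actual implementation: `run_proactive`, `toy_planner`, and `toy_executor` are hypothetical names, and the planner is assumed to be a function that returns the remaining subtasks given the goal and what has been completed so far.

```python
from typing import Callable, List

# Hypothetical signatures (assumptions, not Agent S2's API):
# a planner maps (goal, completed subtasks) to the remaining subtasks,
# an executor runs one subtask and reports whether it succeeded.
Planner = Callable[[str, List[str]], List[str]]
Executor = Callable[[str], bool]


def run_proactive(goal: str, plan: Planner, execute: Executor,
                  max_steps: int = 20) -> List[str]:
    """Refresh the plan after every subtask, not only after a failure."""
    done: List[str] = []
    remaining = plan(goal, done)  # initial plan
    for _ in range(max_steps):    # step budget guards against endless retries
        if not remaining:
            break
        subtask = remaining[0]
        if execute(subtask):
            done.append(subtask)
        # Proactive step: re-plan from the latest state regardless of outcome.
        remaining = plan(goal, done)
    return done


def toy_planner(goal: str, done: List[str]) -> List[str]:
    # Stand-in for a high-level planning model.
    steps = ["open file", "edit text", "save file"]
    return [s for s in steps if s not in done]


def toy_executor(subtask: str) -> bool:
    # Stand-in for low-level execution; always succeeds here.
    return True


completed = run_proactive("edit a file", toy_planner, toy_executor)
```

A reactive variant would call `plan` again only when `execute` returns `False`; the sketch differs from that in a single line, which is the point of the comparison.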

Visual Grounding for Precise Interaction

Agent S2 achieves high-precision interaction with graphical user interfaces (GUIs) through specialized visual grounding models. Unlike its predecessor, which relied on accessibility trees for UI understanding, Agent S2 operates solely on raw screenshots as input, eliminating the need for structured accessibility data. By delegating visual understanding to dedicated models, Agent S2 can accurately locate and manipulate UI elements like buttons, text, images, and cells—enabling fine-grained control that was previously limited by accessibility constraints.
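To make the division of labor concrete, here is a small sketch of how a planner's natural-language element description might be turned into a click through a grounding model. Everything named here (`GroundingModel`, `FakeGrounder`, `click_element`, `Click`) is hypothetical; the fake grounder returns canned coordinates in place of a real vision model that would read the screenshot pixels.

```python
from dataclasses import dataclass
from typing import Dict, Protocol, Tuple


class GroundingModel(Protocol):
    """Assumed interface: screenshot plus description in, pixel coordinates out."""

    def locate(self, screenshot: bytes, description: str) -> Tuple[int, int]: ...


@dataclass
class Click:
    x: int
    y: int


class FakeGrounder:
    """Stand-in for a real visual grounding model; ignores the screenshot."""

    def __init__(self, answers: Dict[str, Tuple[int, int]]):
        self.answers = answers

    def locate(self, screenshot: bytes, description: str) -> Tuple[int, int]:
        return self.answers[description]


def click_element(grounder: GroundingModel, screenshot: bytes,
                  description: str) -> Click:
    # The planner only names the element; the grounder supplies coordinates.
    x, y = grounder.locate(screenshot, description)
    return Click(x, y)


grounder = FakeGrounder({"Save button": (120, 48)})
action = click_element(grounder, b"", "Save button")
```

Because the planner never handles coordinates, no accessibility tree is needed in this flow, only the screenshot and a description.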

Agent-Computer Interface with Expert Modules

Agent S2 improves its Agent-Computer Interface (ACI) by offloading complex, low-level tasks such as text highlighting to specialized expert modules. This reduces the cognitive load on the foundation models, allowing them to focus solely on high-level planning and strategic decision-making.
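As an illustration of offloading, consider text highlighting. In the sketch below, the planner only names the first and last word of the span, and a hypothetical expert function, `highlight_span`, converts that into drag coordinates. The `WordBox` inputs are assumed to come from some word-level text detector; that component, and all names here, are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[int, int]


@dataclass
class WordBox:
    """A detected word and its bounding box in screen pixels (assumed input)."""
    text: str
    left: int
    top: int
    right: int
    bottom: int


def highlight_span(words: List[WordBox], start_word: str,
                   end_word: str) -> Tuple[Point, Point]:
    """Hypothetical expert module: return drag start and end points."""
    # First occurrence of the start word, last occurrence of the end word.
    start = next(w for w in words if w.text == start_word)
    end = next(w for w in reversed(words) if w.text == end_word)

    def mid_y(w: WordBox) -> int:
        return (w.top + w.bottom) // 2

    # Drag from the left edge of the first word to the right edge of the last.
    return (start.left, mid_y(start)), (end.right, mid_y(end))


words = [
    WordBox("Hello", 10, 20, 50, 40),
    WordBox("brave", 55, 20, 95, 40),
    WordBox("world", 100, 20, 140, 40),
]
drag = highlight_span(words, "Hello", "world")
```

The foundation model's output stays at the level of "highlight from Hello to world", while the pixel arithmetic lives in the expert module.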

Agentic Memory Mechanism

Agent S2 uses a continual-learning memory mechanism that allows it to evolve with experience, improving efficiency over time. Experience from previously completed tasks is retained, allowing Agent S2 to recall prior actions and refine future strategies based on historical successes and failures. This adaptive learning capability enables Agent S2 to become more proficient with each application, creating a foundation for long-term adaptive intelligence and personalized automation.
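A minimal sketch of such a memory is shown below. It stores one record per completed task and recalls the most similar past tasks for a new one. The names (`Experience`, `ExperienceMemory`) are hypothetical, and the word-overlap (Jaccard) similarity is a simple stand-in chosen for the example, not the retrieval method Agent S2 uses.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Experience:
    task: str
    succeeded: bool
    summary: str


@dataclass
class ExperienceMemory:
    items: List[Experience] = field(default_factory=list)

    def add(self, exp: Experience) -> None:
        self.items.append(exp)

    def recall(self, task: str, k: int = 1) -> List[Experience]:
        """Return the k stored experiences whose task text best matches."""
        query = set(task.lower().split())

        def score(exp: Experience) -> float:
            # Jaccard similarity over lowercase words (illustrative only).
            words = set(exp.task.lower().split())
            union = query | words
            return len(query & words) / len(union) if union else 0.0

        return sorted(self.items, key=score, reverse=True)[:k]


memory = ExperienceMemory()
memory.add(Experience("compress image with GIMP", True,
                      "Export as JPEG and lower the quality slider."))
memory.add(Experience("calculate profit in Calc", False,
                      "Formula referenced the wrong column."))
best = memory.recall("compress an image in GIMP")[0]
```

Both successes and failures are kept, so a recalled failure summary can steer the next plan away from a known mistake.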

This modular architecture also makes scaling and adaptation straightforward. New modules powered by foundation or expert models can be integrated, removed, or swapped, allowing Agent S2 to adapt rapidly to new task domains.
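One way to picture this swappability is a small registry keyed by role. The sketch below is an assumption-laden illustration: `ModuleRegistry` and the role names are hypothetical, and each module is reduced to a plain function from text to text.

```python
from typing import Callable, Dict

Module = Callable[[str], str]


class ModuleRegistry:
    """Hypothetical registry mapping a role name to the module that fills it."""

    def __init__(self) -> None:
        self._modules: Dict[str, Module] = {}

    def register(self, role: str, module: Module) -> None:
        # Registering under an existing role swaps the module in place.
        self._modules[role] = module

    def remove(self, role: str) -> None:
        self._modules.pop(role, None)

    def run(self, role: str, payload: str) -> str:
        if role not in self._modules:
            raise KeyError(f"no module registered for role {role!r}")
        return self._modules[role](payload)


registry = ModuleRegistry()
registry.register("planner", lambda task: f"plan-v1({task})")
first = registry.run("planner", "export doc")

# Swap in a different planner without touching any caller.
registry.register("planner", lambda task: f"plan-v2({task})")
second = registry.run("planner", "export doc")
```

Callers depend only on the role name, so replacing the model behind a role requires no changes elsewhere.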

Agent S2 in Action

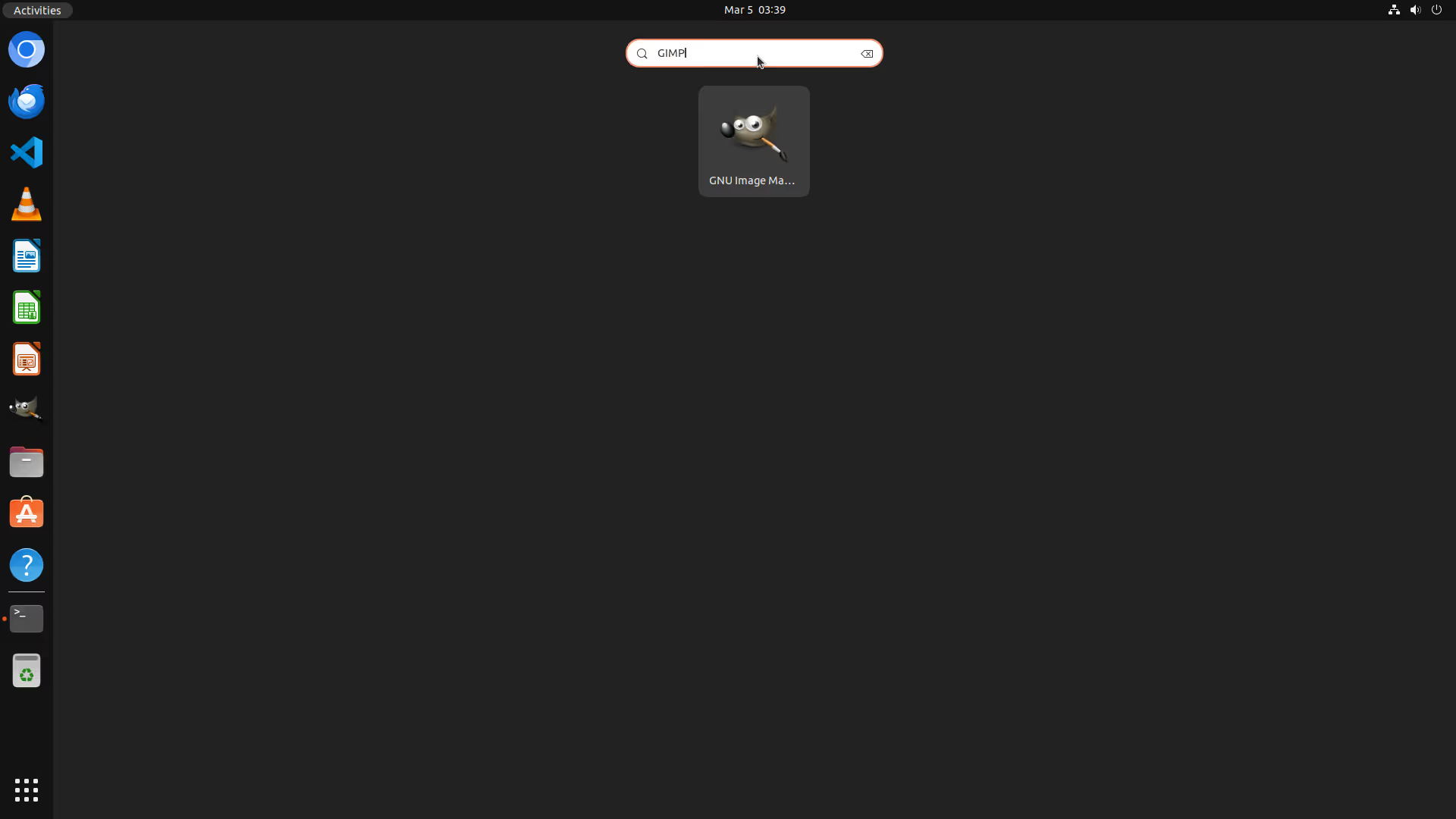

Computer Use

Download an image from Google Drive and use GIMP to compress it

Copy Image Into Doc

Copy an image from GIMP to a LibreOffice Writer doc, and then export the doc

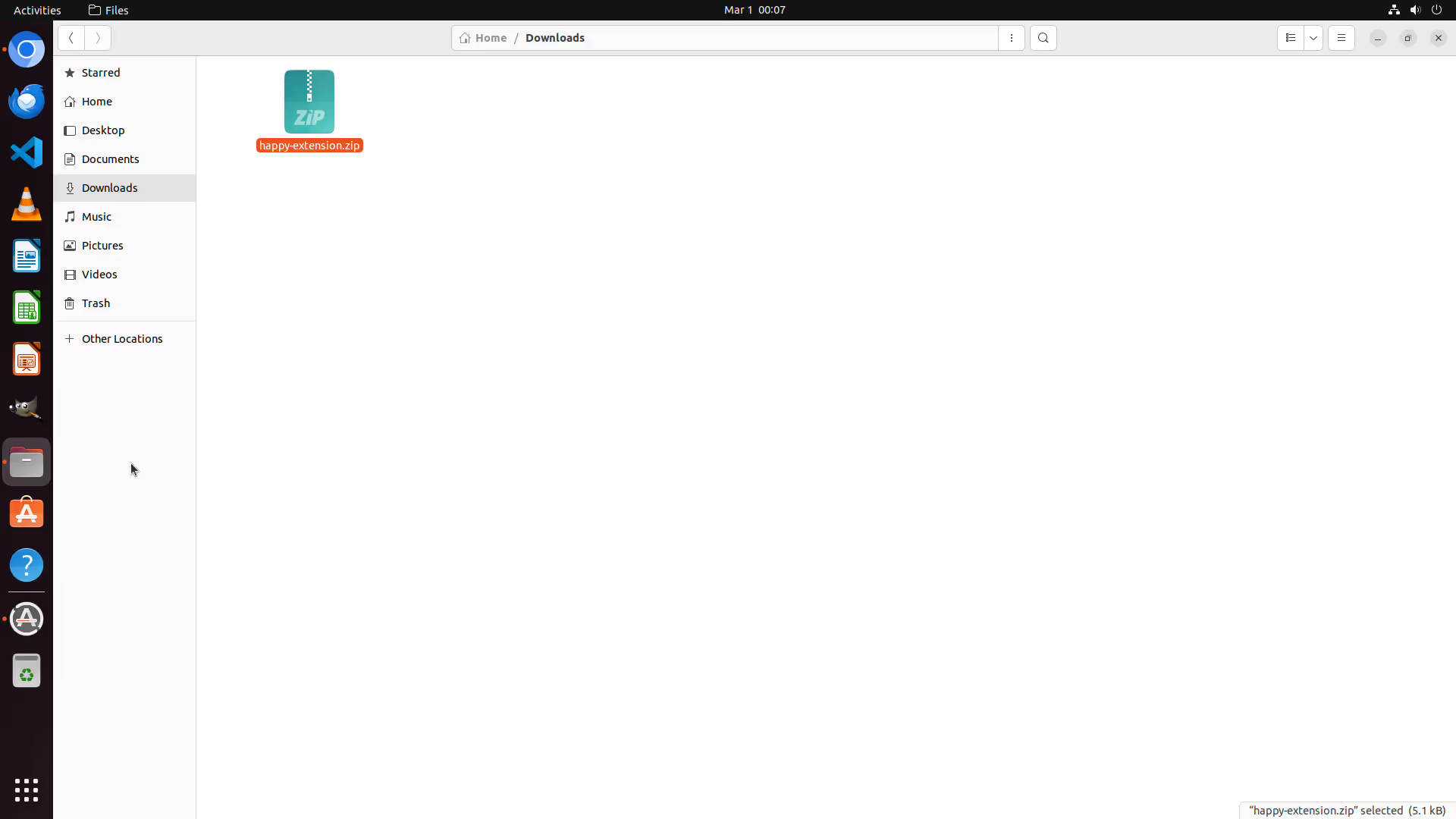

Setup Web Extension

Set up a web extension

Remove Video Subtitles

Remove subtitles from a video and export the new video

Calculate Profit

Calculate the profit in a LibreOffice Calc sheet

Strikethrough Paragraph

Strikethrough the last paragraph in a LibreOffice Writer doc

Agent S2 on your Smartphone

Fill forms

Task: Go to the new contact screen and enter the following details: First Name: Grace, Last Name: Taylor, Phone: 799-802-1530, Phone Label: Work. Do NOT hit save.

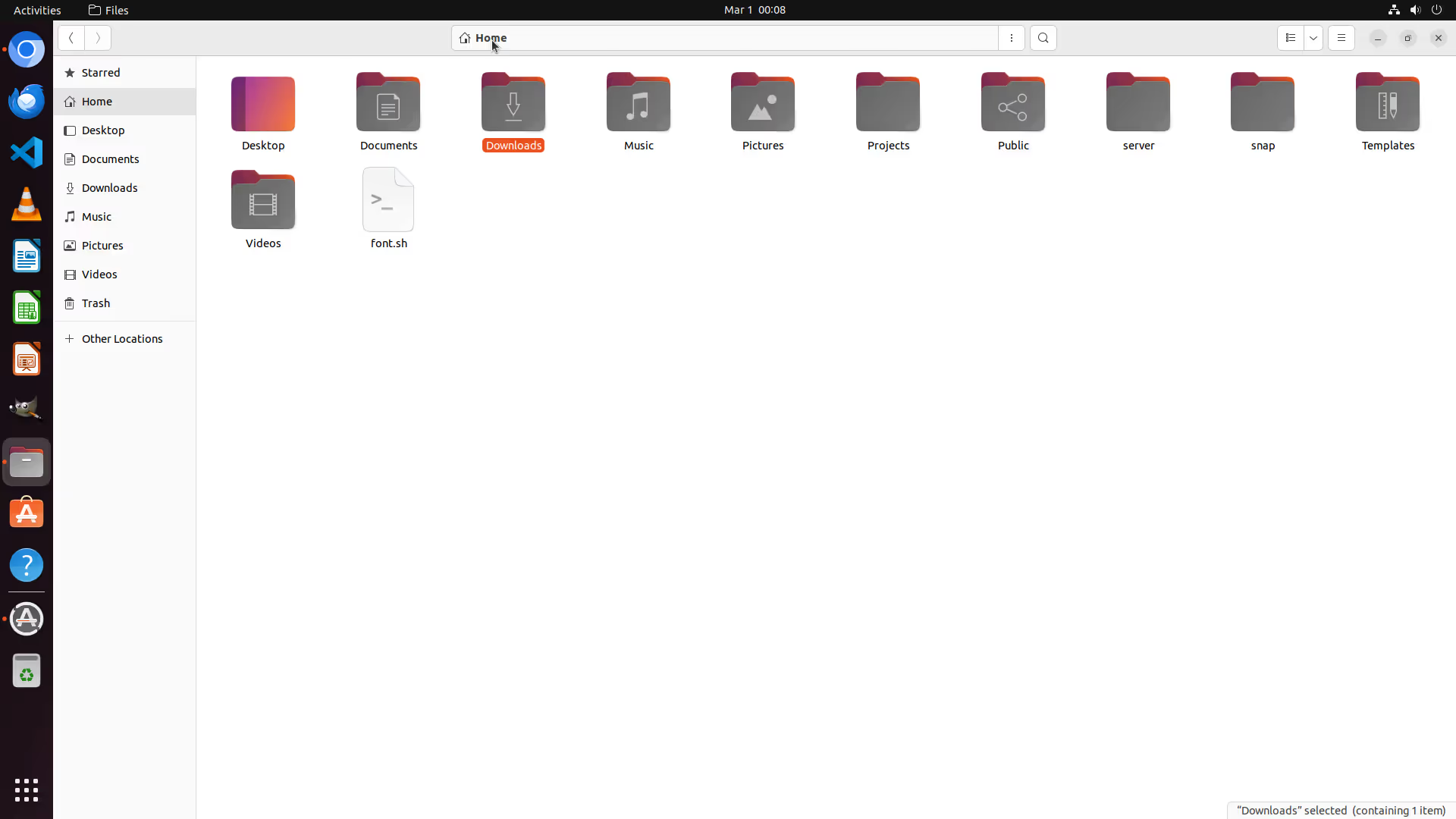

Organize files

Task: Move the file holiday_photos.jpg from Podcasts within the sdk_gphone_x86_64 storage area to the DCIM within the same sdk_gphone_x86_64 storage area in the Android filesystem.

Ready to use your computer in a Simular way?

Share and organize your memory, and personalize your tasks.